Better Data on Graduates’ Earnings Is Coming Soon to a Dashboard Near You. Will It Make a Difference?

About a decade ago, the higher-ed world rallied around the notion of access. Everyone should go to college! Then it was completion. What’s the point of everyone going to college if too many will drop out, saddled with debt? Now a different concept has taken hold: value. How can colleges demonstrate that what they offer is worth the effort and expense?

Attempts to determine the value of a college degree or credential have led to a recent surge of legislative efforts at both the federal and state levels to improve the data available to students on postgraduation earnings. Last month, a bipartisan group of U.S. representatives and senators reintroduced the College Transparency Act, which passed the House last year and would make sweeping changes to the data collected and collated by the U.S. Education Department, and allow for a much more detailed understanding of students’ economic outcomes. Several states, including Colorado, Ohio, and Texas, are attempting to improve their outcomes data, too.

After years of frustration and false starts, governments and college leaders may be on the cusp of being able to quantify the value of a degree or credential at a granular level — right down to a particular program at a certain institution, complete with details about student demographics.

While everyone has been figuring out how to improve earnings data, another question has gone less examined: Who is actually using these analyses?

Determining the return on investment, or ROI, of a college degree would seem to be in the interests of both the general public and individual students and their families. The billions of federal and state dollars spent on student aid and public-college support, not to mention the best interests of the country and the health of its economy, mean that it makes sense for elected officials to make sure that those students and taxpayers are getting their money’s worth. Skepticism about the value of a degree is one reason colleges would want to make a convincing case for themselves. A survey conducted in 2021 by the Bipartisan Policy Center and the American Association of Colleges and Universities found that 60 percent of American adults thought a college degree was worth the time and expense, though only 51 percent of respondents with only a high-school education thought so. And for college freshmen, being able to get a better job was, at 81 percent, the reason most likely to be cited as “very important” in their decision to go to college, according to the Higher Education Research Institute at the University of California at Los Angeles.

But as data-improvement efforts ramp up, “we have very little sense that students are using this information,” says Zachary Bleemer, an assistant professor of economics at Yale University and a research associate at the Center for Studies in Higher Education at the University of California at Berkeley. “It’s sort of an interesting question who these data are for.”

The current thirst for earnings data and information on a student’s return on investment may have another, more-surprising origin, says David Baime, senior vice president for government relations at the American Association of Community Colleges: the federal gainful-employment rule. The rule, which was introduced by the Education Department under President Barack Obama in 2013, made academic programs ineligible for federal funding if their students’ annual loan payments were greater than a certain percentage of their income.

While enforcement of the rule was suspended by Betsy DeVos, secretary of education under President Donald J. Trump, the gainful-employment rule “was a really important development,” Baime says. In addition to trying to hold ineffective programs and their host institutions accountable, he adds, it gathered and collated wage data and “in a very prominent way, articulated and emphasized the concept of linking debt to what people earn.” The Biden administration is reportedly working on a new iteration of the rule.

The federal government has been collecting detailed earnings data for nearly a century. When President Franklin D. Roosevelt signed federal unemployment insurance into law as part of the New Deal in the 1930s, the newly formed Social Security Administration began gathering wage records of almost every worker in the country in order to calculate benefits. Officials have been finding uses for it ever since. “The federal government has this data all over the place,” says Anthony P. Carnevale, director of the Center on Education and the Workforce at Georgetown University. “We use it to chase deadbeat dads and criminals and spies” and other individuals who might want to obfuscate their source of income.

And then there is the trove of education records that follow students throughout their academic careers — from kindergarten through high-school graduation, and often into a public college. Connecting all those data points with work-force outcomes could yield valuable insights into how, and possibly why, some students fare better than others.

The federal government does not use such data to track individually identifiable Americans through their educational and work lives — a so-called unit record. In fact, it’s against the law to do so.

Another Obama-era innovation, the College Scorecard, launched in 2015 as a public-facing online dashboard meant to provide students and families with specific information about colleges, their costs of attendance, and the likely economic outcomes for students. The dashboard uses wage information from the Education Department’s National Student Loan Data System and the Internal Revenue Service. For example, the median earnings for English majors enrolled four years ago at Grinnell College, as determined by the college submitting their de-identified Social Security numbers, are $49,405. With the salary information associated with the 12 of those students who took out federal student loans at the college, the Scorecard reveals median monthly earnings of $4,117 and a median loan payment of $186.
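The Grinnell figures above lend themselves to a quick back-of-the-envelope check. The short Python sketch below is only an illustration, not anything the Scorecard itself publishes: it divides the annual median by 12 and compares the median loan payment with monthly earnings. The function names are hypothetical, and the sketch assumes the loan payment is a monthly figure that can be set directly against the monthly earnings number.

```python
def monthly_from_annual(annual_earnings: float) -> float:
    """Convert an annual earnings figure to a monthly one."""
    return annual_earnings / 12


def payment_share(monthly_payment: float, monthly_earnings: float) -> float:
    """Return the loan payment as a fraction of monthly earnings."""
    return monthly_payment / monthly_earnings


# Figures quoted in the article for Grinnell English majors.
monthly = monthly_from_annual(49_405)
share = payment_share(186, monthly)

print(f"Median monthly earnings: ${monthly:,.0f}")
print(f"Loan payment as a share of earnings: {share:.1%}")
```

On these figures, the annual median matches the $4,117 monthly number, and the loan payment works out to roughly 4.5 percent of monthly earnings.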

But beyond its user-friendly interface, the Scorecard quickly bumps into substantial limits. The data is drawn exclusively from students who receive federal grants or take out federal student loans, which leaves out about 30 percent of college students overall. Unless it specifies otherwise, the data includes any eligible students who enrolled in but didn’t complete the programs, which could considerably skew the resulting wage information. The federal data is also weak on details. None of the results are segmented by occupation within a major — the same Grinnell English graduates mentioned above could be working at book-publishing houses or as baristas, and their salaries go into the same pool for averaging. Nor is the data segmented by demographics such as gender or race.

The reintroduced College Transparency Act could change much of that. The bill would call for a new level of information gathering and cooperation between federal agencies in order to give students and families a clearer picture of what the costs and benefits of college entail. If passed, the bill won’t necessarily mean the creation of student unit records, but “it’ll literally double the volume of the data that will be collected,” Carnevale says. “There will be transparency for all degrees on graduation, and economic outcomes, and there will be accountability for job training.”

The act could have other, less-direct effects. Proposed college-aid improvements, such as free community college at the national level, as previously advanced by the Biden administration, or expanding Pell Grants to cover noncredit work-force credentials, could hinge on better data about outcomes for such programs, Carnevale adds. Work-force-training programs, which typically collect little or no data in most states and almost none at the federal level, especially need good outcomes data, he says, otherwise “you train people for a lot of jobs that don’t exist.”

U.S. Rep. Virginia Foxx, the North Carolina Republican who chairs the House Committee on Education and the Workforce, disagrees with the extent of the data that the act would require collecting. Good data is important to improve transparency for students, but the College Transparency Act “goes about data collection the wrong way, empowering bureaucrats to invade the privacy of students even when they choose not to take federal financial aid,” she wrote in a statement to The Chronicle. “There is already substantial data available on the College Scorecard and at the state level.”

Carnevale, who worked for years as a Congressional and White House staff member before coming to academe, believes that resistance to the act, or some sweeping accountability measure like it, is futile. “This is coming like a truck,” he says, “and higher ed is trying not to see it.”

States have their own reasons for wanting to measure the monetary value of a degree. Many states have established specific goals for increasing the number of their citizens with a postsecondary credential, to help fill jobs that demand one. On the way to chasing those goals over the past decade, officials in some states began to wonder whether they were after the right target.

Texas, for example, adopted a strategic plan in 2015 called 60x30TX. The name telegraphed its key metric: Sixty percent of Texans ages 25 to 34 should have a degree or certificate by 2030, a more than 20-percentage-point increase from the 38 percent who held one in 2013. The issue with these state attainment goals, says Harrison Keller, the state’s commissioner of higher education, is that they’re generic — they’re more about counting credentials than making sure citizens have a degree or certificate that will put them on the path to a better life.

When Keller became commissioner in late 2019, he worked with other leaders across the state to start updating the strategic plan. And at the heart of the new plan lay a new way of looking at credentials. “We don’t just want to create more credentials, and count more credentials, and claim that we’re making progress,” Keller says. “We only want to count credentials that are of value to individuals in Texas and to our Texas employers.” Deciding which credentials are of value entails determining which lead to good jobs and which don’t, which means tracking student outcomes and ROI.

Texas already has a fairly extensive internal educational-data system. It includes wage data from across the state going back for a decade, which can be integrated with information from the state’s primary and secondary schools, public colleges, and work force. The goal is to determine how the wages of a Texan with a high-school diploma will compare with those of someone who earned a postsecondary credential. “If you have a credential that doesn’t actually translate into a wage premium — and that wage premium has to be enough to offset the typical cost when you’re done,” Keller says, “we don’t want to count that credential.”
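Keller’s test can be read as a simple comparison, sketched below in Python. This is a rough illustration under stated assumptions, not the state’s actual methodology: the function names, the 10-year payback window, and every dollar amount are hypothetical, since the article does not say how Texas measures the premium or over what period it must offset the cost.

```python
def wage_premium(credential_wage: float, high_school_wage: float) -> float:
    """Annual earnings advantage of a credential holder over a
    high-school graduate."""
    return credential_wage - high_school_wage


def offsets_cost(credential_wage: float,
                 high_school_wage: float,
                 typical_cost: float,
                 payback_years: int = 10) -> bool:
    """True if there is a positive premium that, summed over the assumed
    payback window, covers the typical cost of the credential.
    The 10-year default is an assumption made for illustration."""
    premium = wage_premium(credential_wage, high_school_wage)
    return premium > 0 and premium * payback_years >= typical_cost


# Made-up numbers, purely for illustration.
print(offsets_cost(45_000, 35_000, 40_000))  # premium of 10,000 a year
print(offsets_cost(36_000, 35_000, 40_000))  # premium of 1,000 a year
```

With these invented inputs, the first credential would count and the second would not, because its premium never catches up with its cost inside the assumed window.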

The Texas data also holds promise that the federal data doesn’t. It’s already segmented by race and gender, and can be updated often. Keller says some college presidents have even suggested to him that they’d get the most out of the data if they could move from an annual reporting schedule to a quarterly one, for results that are more real time. The Texas data set also has its limits. It covers only students enrolled at public colleges, not those at private institutions. And without the reach of federal tax information, if students move out of state, they drop out of the data set.

Some officials in other states are wary of the tight focus on earnings and ROI, even as such efforts gather momentum. In 2020, Colorado enacted a law requiring the state department of higher education to collect the data necessary to compile an annual report on ROI for public-college students. While it’s generally a good thing to provide this information for students, says Landon K. Pirius, vice chancellor for academic and student affairs at the Colorado Community College system, he worries that, for example, it may tip students toward prioritizing higher-paying majors and away from majors that might reward them, or society, in other ways. “Where is the value of some of those careers out there that society needs but maybe don’t have a high wage?” he says. “I think about teachers, I think about early-childhood educators, social workers. Those are needed by our society yet, unfortunately, there’s not a lot of pay.”

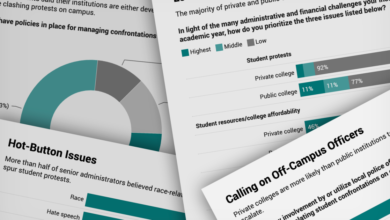

Since the College Scorecard officially rolled out in September 2015, only one study has examined whether its earnings data influenced student decision making. In 2016, researchers at Georgia State University and the College Board found that each 10-percent increase in reported earnings at a particular college was associated with a 2-percent increase in potential students sending their test scores to that institution. But they could find no clear evidence of shifts in enrollment because of the data. They also wrote that the students who were most likely to act on Scorecard data were white and relatively affluent, “the subgroups of students expected to enter the college search process with the most information and most cultural capital.”

It shouldn’t be surprising that wealthier students might pay more attention to the Scorecard, says Michelle Dimino, deputy director of education at Third Way, a policy group that advocates for center-left ideas, according to its website. “Students who could most benefit from newly available data on college outcomes often have the least access to it,” she writes in an email. “Simply providing more information has little tangible effect on behavior if it isn’t paired with some level of personalization or active engagement from the recipient.”

It’s not certain, however, that presenting outcomes data in a more-personalized fashion improves its effectiveness. Deciding on a college and then a major is a complicated and sometimes yearslong process for many students. Bleemer, the Yale professor, cites research done in the 2010s by Matthew Wiswall, a professor of economics at the University of Wisconsin at Madison, and Basit Zafar, a professor of economics at the University of Michigan at Ann Arbor. The professors presented information sessions to undergraduates about the economic returns of college majors, and then evaluated how that information did or didn’t alter the students’ attitudes about graduating in particular majors. The data had minimal effect at best. “People are extremely insensitive to this information,” Bleemer says. “Even when you really go out of your way to try to convince them.”

So the data could be better, but so could the understanding of whether the data actually matters — or could be made to matter — to the students it’s meant to help. Bleemer thinks he knows why there’s so little research about the effectiveness of outcomes data. It would be relatively easy to figure out who’s accessing the College Scorecard, or the new Texas student-facing data site that’s under construction. But it’s far harder to follow up with them a year, or two years, or five, or 10 or more years later to find out where they went to college, how they made the decision to go there, what they studied, and how they’re doing now.

The U.S. Education Department is aware that the College Scorecard’s usage numbers “are not necessarily where we’d want them to be,” says a department official who spoke on the condition that he not be named. But the Scorecard data is designed to be used easily and widely beyond its bounds. The site is built with a so-called open application-programming interface so that users and other developers can access the data for their own purposes. Scorecard data ends up being used in many other search tools and rankings, says Dimino of Third Way, among them her organization’s Economic Mobility Index. Students using Google to look up a prospective institution may be accessing federal data without even knowing it, and with no way of that being tracked.

So who can make the most obvious use of this potential new flood of data? Well, it can be used by elected officials to target funding and by watchdogs to call out underperforming programs. “While the rhetoric around these policies seems to be directed at students as consumers,” Bleemer says, “my sense is that the primary users, and the way in which these data can be most impactful, is by policymakers and reporters.”
